Month-over-Month (MoM) reporting is the default in most dashboards, and it feels straightforward. But calendar months differ in length, and that difference shapes the story your data tells.

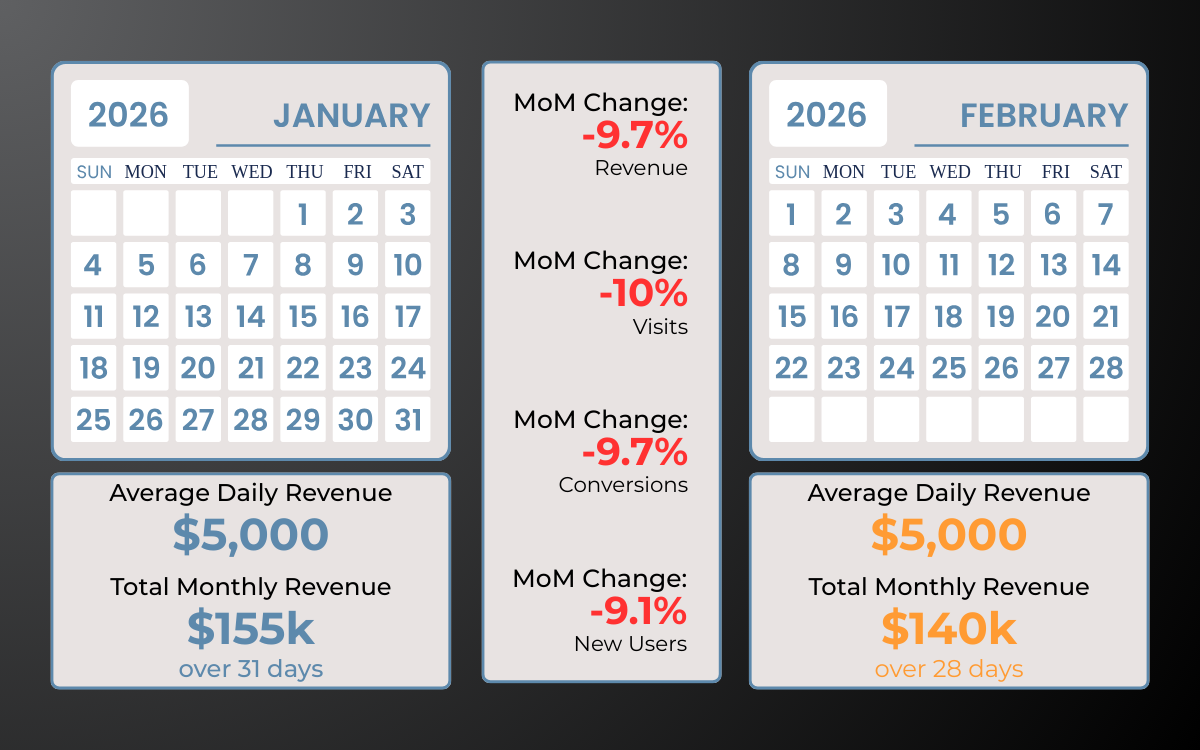

The visual example of January with 31 days versus February with 28 shows how stable daily performance can appear as a decline simply because one month is shorter.

When revenue, visits, and conversions are judged on totals alone, the calendar becomes the variable driving the narrative.

The February Problem: Why MoM Comparisons Are Misleading

There are three common issues:

- Unequal Month Length

  A shorter month produces lower totals even if average daily performance remains unchanged. If your campaign generates $1,000 a day, January will show $31,000 in revenue and February will show $28,000. By comparing the two as "whole months," you're asking February to perform at the same level as January while giving it roughly 10% less time to do so.

- Weekday Distribution Shifts

  If one month has more weekends or a different weekday mix, conversion rates may vary independent of campaign quality. If January has 5 full weekends and February has only 4, your "total month" data is skewed by the composition of the calendar, not necessarily by a decline in performance.

- Delayed Optimization

  Waiting for month-end totals and attribution windows to pass slows decision-making. Digital campaigns operate on daily and weekly shifts, not accounting cycles.
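To make the month-length effect concrete, here is a minimal sketch of the $1,000-a-day example. The `pct_change` helper and the variable names are illustrative assumptions, not part of any reporting tool.

```python
def pct_change(current: float, previous: float) -> float:
    """Percentage change from `previous` to `current`."""
    return (current - previous) / previous * 100


DAILY_REVENUE = 1_000        # flat daily revenue, as in the example above
jan_days, feb_days = 31, 28  # non-leap-year February

jan_total = DAILY_REVENUE * jan_days  # 31,000
feb_total = DAILY_REVENUE * feb_days  # 28,000

# Comparing totals penalizes February for being shorter.
mom_total_change = pct_change(feb_total, jan_total)

# Comparing daily averages removes the month-length effect.
mom_daily_change = pct_change(feb_total / feb_days, jan_total / jan_days)

print(f"MoM change in total revenue: {mom_total_change:.1f}%")  # -9.7%
print(f"MoM change in daily average: {mom_daily_change:.1f}%")  # 0.0%
```

Daily performance is identical in both months, yet the totals-based comparison reports a drop of nearly 10%.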

Why Period-over-Period (PoP) is the Smarter Choice

PoP reporting compares equal timeframes. Instead of calendar months, you evaluate consistent rolling windows (e.g., the last 28 days) or defined campaign periods against the immediately preceding timeframe of the same length.

This removes month length bias and reduces weekday distortion. Performance changes reflect marketing inputs, not structural calendar differences.
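As a rough illustration of the comparison described above, the sketch below sums a metric over a rolling window and over the equal-length window immediately before it. The function name `period_over_period`, the dictionary-of-dates input, and the sample data are all assumptions made for this example; a real dashboard would pull these values from its own data source.

```python
from datetime import date, timedelta


def period_over_period(daily: dict[date, float], end: date, window_days: int = 28):
    """Compare the window ending on `end` with the equal-length window before it.

    Days missing from `daily` are counted as 0 (an assumption of this sketch).
    """
    current_days = [end - timedelta(days=i) for i in range(window_days)]
    previous_days = [d - timedelta(days=window_days) for d in current_days]

    current = sum(daily.get(d, 0.0) for d in current_days)
    previous = sum(daily.get(d, 0.0) for d in previous_days)
    change_pct = (current - previous) / previous * 100 if previous else None
    return current, previous, change_pct


# Hypothetical data: a flat 1,000 per day across January and February 2027.
start = date(2027, 1, 1)
daily_revenue = {start + timedelta(days=i): 1_000.0 for i in range(59)}

current, previous, change = period_over_period(daily_revenue, end=date(2027, 2, 28))
print(current, previous, change)  # 28000.0 28000.0 0.0
```

With flat daily revenue, the 28-day comparison reports no change, where a calendar-month comparison of the same data would show a decline.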

For teams using a centralized data warehouse and Looker Studio, rolling comparisons can be standardized across dashboards to ensure consistency in analytics reporting.

Here is why PoP is the gold standard for campaign analysis:

- Equalized Data Sets

  When you compare the last 28 days to the 28 days prior, you are working with structurally identical datasets. You remove the hidden variables created by uneven month lengths and gain a clearer view of trend direction.

- Better Handling of Seasonality

  Digital marketing performance is influenced by promotions, launches, holidays, and budget changes. Using custom periods allows you to isolate a defined campaign window, such as a 14-day promotion, and compare it directly to the 14 days preceding it.

  MoM reporting often splits those windows across calendar boundaries, making lift harder to measure.

- Agility in Optimization

  Waiting until the end of a calendar month to "see how we did" is a reactive way to manage a budget. PoP allows you to look at rolling 7-day or 14-day windows. If your CPA increases over the last 7 days compared to the 7 days prior, you can make adjustments immediately rather than waiting for a monthly summary.
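The rolling CPA check described above can be sketched as follows. The function `rolling_cpa_change`, the sample spend and conversion figures, and the 10% alert threshold are hypothetical values chosen for illustration; pick a threshold that suits your own account.

```python
def rolling_cpa_change(spend, conversions, window=7):
    """Compare CPA for the latest `window` days with the `window` days before it.

    `spend` and `conversions` are daily values ordered oldest to newest.
    """
    if len(spend) != len(conversions) or len(spend) < 2 * window:
        raise ValueError("need two full windows of aligned daily data")

    def cpa(days):
        total_conversions = sum(conversions[days])
        if total_conversions == 0:
            raise ValueError("no conversions in window; CPA is undefined")
        return sum(spend[days]) / total_conversions

    recent = cpa(slice(-window, None))         # last 7 days
    prior = cpa(slice(-2 * window, -window))   # the 7 days before that
    return recent, prior, (recent - prior) / prior * 100


# Hypothetical data: steady spend, fewer conversions in the latest week.
spend = [500.0] * 14
conversions = [25] * 7 + [20] * 7

recent, prior, change_pct = rolling_cpa_change(spend, conversions)

ALERT_THRESHOLD_PCT = 10.0  # illustrative threshold, not a recommendation
alert = change_pct > ALERT_THRESHOLD_PCT
print(f"CPA {prior:.2f} -> {recent:.2f} ({change_pct:+.1f}%), alert={alert}")
```

CPA is computed from window totals (total spend divided by total conversions) rather than by averaging daily CPAs, so low-volume days do not distort the result.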

How to Make the Switch

Shifting away from default MoM reporting doesn't require a full rebuild. Here's how to adjust your reporting workflow:

- Standardize Your Windows

  Use 28-day cycles for primary performance reporting. This ensures you always have an equal number of Mondays, Tuesdays, etc., in both periods.

- Focus on Daily Averages

  If calendar months are required (due to billing or client preference), include daily average KPIs alongside totals to provide context.

- Annotate the Calendar

  In executive summaries, clearly call out short months and major holidays to prevent overreaction to structurally disadvantaged periods.
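To see why 28-day windows keep the weekday mix constant, the sketch below counts weekdays in a calendar month and in a 28-day window. The year 2027 is an arbitrary choice for illustration, and `weekday_counts` is a hypothetical helper.

```python
from collections import Counter
from datetime import date, timedelta

DAY_NAMES = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def weekday_counts(start: date, days: int) -> Counter:
    """Count how often each weekday occurs in the `days` days starting at `start`."""
    return Counter(
        DAY_NAMES[(start + timedelta(days=i)).weekday()] for i in range(days)
    )


# A 31-day calendar month: some weekdays occur 5 times, others 4.
january = weekday_counts(date(2027, 1, 1), 31)

# Any 28-day window: every weekday occurs exactly 4 times.
window = weekday_counts(date(2027, 1, 4), 28)

print("January 2027:", dict(january))
print("28-day window:", dict(window))
```

Because 28 is a multiple of 7, the balance holds for every 28-day window regardless of its start date. The same is true of 7-day and 14-day windows.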

Rethinking Your Reporting Framework

When timeframe structure distorts percentage change, teams react to calendar math instead of campaign reality. PoP reporting corrects that by standardizing comparisons and aligning analysis with how campaigns actually run.

At Calibrate Analytics, we help teams design reporting environments that prioritize structural accuracy, whether inside Looker Studio or at the data warehouse level.

If your dashboards rely heavily on calendar-month comparisons and you're questioning the reliability of your trend data, contact us to discuss how to implement rolling PoP reporting across your analytics stack.